Available now on visionOS, and rolling out slowly for iOS/iPadOS, is Callsheet version 2026.4. The changelist is not long, but it is mighty:

New Features

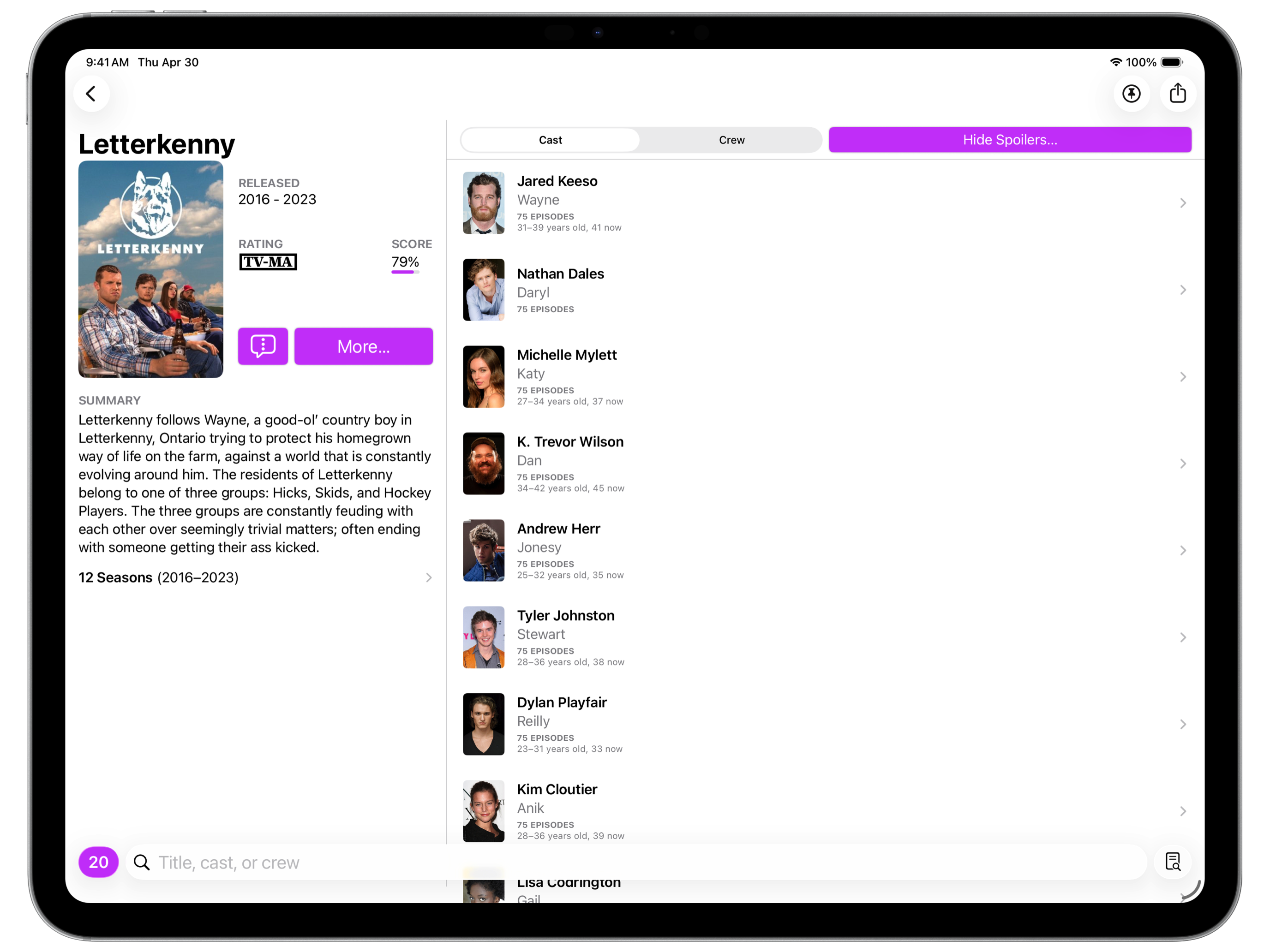

- All-new iPad landscape layout that makes far more efficient use of the space. This also applies to macOS and visionOS.

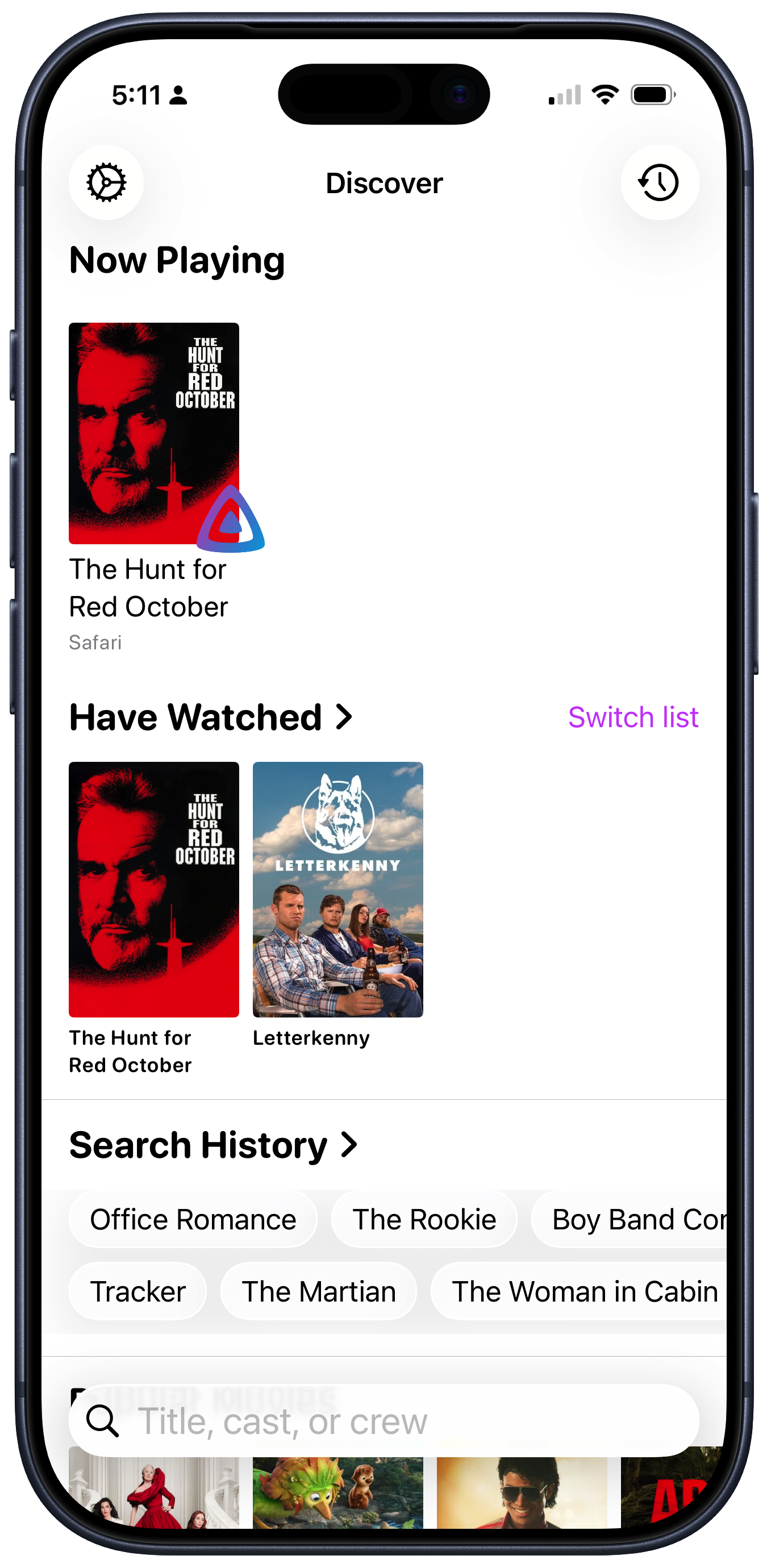

- Support for Jellyfin in the Now Playing view. Note that this does require authenticating with your Jellyfin server; see the Callsheet website for details.

- Added the ability to copy a person’s name or their role/job via a context menu

- Localized the “Johnny Appleseed” and “Botanist” example name/job shown in Spoiler Settings. I tried to make these fun but if I missed the mark, let me know!

Fixes

- The “Hide Spoilers…” button can now be tapped anywhere within it, rather than just where the text is

- Titles/names in the Discover screen are no longer truncated whenever it can be avoided

- Fixed a rare case where using the chevron to page between movies in a collection could fail

I wanted to discuss two of these new features.

iPad Landscape Layout

For better — but perhaps mostly for worse — I think of Callsheet as an iOS app. I develop for the iPhone, with all other platforms getting varying [small] amounts of my attention. I don’t say this with pride, but rather to ~~excuse~~ explain why the iPad layout was so janky for so long. I just kept having other, bigger, fish to fry.

In addition to prioritizing other things above redesigning the iPad layout, I also… wasn’t sure what to do. I went through a few ideas, but they all died on the vine. Some were too difficult to implement because they broke assumptions that SwiftUI makes about the way you use SwiftUI controls. Some were reasonably easy to implement, but I found they didn’t really improve the way the app felt.

Where I eventually landed was to switch the landscape layout to a sorta-kinda two-pane layout. The left side has what I had previously considered the “above the fold” content; the right side has the cast/crew lists.

I feel like this works pretty well, and still feels like Callsheet. I may refine it more in the future, but I’m way way happier with how this feels and works than I was with the embarrassment that preceded it.

Jellyfin Support in Now Playing

When I first started integrating Plex into Callsheet — Callsheet can show what is currently playing on a local Plex client — I was convinced that doing any sort of authentication/login/authorization/whatever flow was too onerous for a user. I wanted to limit myself only to what could be discovered without any sort of logging in to anything.

I continued this self-imposed rule when integrating with Channels.

Over the last year or so, I’ve become ever-less-enthusiastic about Plex. While my needs from Plex have not changed at all, their interest in serving those needs has diminished dramatically. As such, I’ve long considered whether I should find an alternative.

This is also exacerbated by Plex using an absolutely bananas and deeply unreliable mechanism for sharing playback information. It almost never works, and I sorta regret even including it in Callsheet.

A couple months back, I finally gave in, and installed Jellyfin, which is running as a peer of Plex on the same Mac mini.

I’m not here to review Jellyfin in this post, but the ultra-short version is, it’s pretty great, as long as you don’t need to share your content with anyone, and as long as you find a third-party player you like. (For me, I really like SenPlayer.)

Now that I’m using Jellyfin for the consumption of non-ephemeral media, I wanted to see that information in Callsheet. So, I started down the path of seeing what I could glean from my Jellyfin server without having any sort of login dance.

That expedition ended quickly. In failure. Jellyfin doesn’t offer any sort of now playing information if you don’t have some sort of authentication with the server.

However, I was able to discover a pretty reasonable alternative.

Jellyfin has the concept of Quick Connect, in which the user flow is:

- The user specifies the server URL

- The client app gives the user a six-digit Quick Connect code

- The user enters that code on the Jellyfin management website

(I should note the client app flow is a fair bit more complicated, but well within reason.)
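The client side of that flow is roughly: start a Quick Connect session, show the user the code, poll until the server reports the code was approved, then exchange the secret for an access token. Here's a minimal Python sketch of that loop. The endpoint paths (`/QuickConnect/Initiate`, `/QuickConnect/Connect`, `/Users/AuthenticateWithQuickConnect`) and the `MediaBrowser` header format are my understanding of Jellyfin's REST API rather than anything from this post, and older servers may expect `GET` instead of `POST` for `Initiate`, so check your server's API documentation. The injected `http` callable, the client/device names, and the polling interval are all hypothetical.

```python
import time
from typing import Any, Callable, Optional
from urllib.parse import quote

# An injected HTTP helper keeps this sketch offline-testable:
# (method, path, json_body, headers) -> parsed JSON response.
Http = Callable[[str, str, Optional[dict], dict], Any]


def auth_header(device_id: str, token: Optional[str] = None) -> dict:
    """Build a Jellyfin-style "MediaBrowser" Authorization header.

    The Client/Device/Version values are placeholders, not Callsheet's.
    """
    parts = [
        'Client="QuickConnectSketch"',
        'Device="iPhone"',
        f'DeviceId="{device_id}"',
        'Version="0.1"',
    ]
    if token:
        parts.append(f'Token="{token}"')
    return {"Authorization": "MediaBrowser " + ", ".join(parts)}


def quick_connect(
    http: Http,
    device_id: str,
    show_code: Callable[[str], None],
    poll_every: float = 5.0,
    max_polls: int = 60,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Run the Quick Connect handshake and return an access token."""
    headers = auth_header(device_id)
    # 1. Ask the server for a code/secret pair.
    started = http("POST", "/QuickConnect/Initiate", None, headers)
    show_code(started["Code"])  # the six-digit code the user types into Jellyfin
    secret = started["Secret"]  # kept by the client, never shown to the user
    # 2. Poll until the user approves the code on the server.
    for _ in range(max_polls):
        status = http("GET", f"/QuickConnect/Connect?secret={quote(secret)}", None, headers)
        if status.get("Authenticated"):
            # 3. Trade the approved secret for a long-lived access token.
            result = http("POST", "/Users/AuthenticateWithQuickConnect", {"Secret": secret}, headers)
            return result["AccessToken"]
        sleep(poll_every)
    raise TimeoutError("Quick Connect code was not approved in time")
```

In a real client, `http` would wrap an actual HTTP library and prefix the server URL the user entered; the returned token is what gets stored so the dance only has to happen once.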

While far from auto-discovery, this is far from onerous.

So, that’s what I’ve done.

In Callsheet, if you go to Settings → Persnickety Preferences → Integrations, there’s a section for Jellyfin. You can enter your server URL, get a Quick Connect code, and then open a web browser right there to enter that code in your server’s administration panel.

In principle, this dance should only need to happen once; Callsheet will retain the connection to Jellyfin for as long as your server allows.
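Once a token is stored, the now-playing lookup itself is a single authenticated request. As I understand Jellyfin's API, `GET /Sessions` (sent with the `Token` in the authorization header) returns a list of sessions, and sessions with active playback carry a `NowPlayingItem`. The field names below are assumptions based on that API, and the summary format is purely illustrative, not how Callsheet presents it.

```python
from typing import Any, Dict, List


def now_playing(sessions: List[Dict[str, Any]]) -> List[str]:
    """Summarize active playback from a Jellyfin /Sessions response.

    Field names (NowPlayingItem, Type, Name, SeriesName, DeviceName) are
    assumed from Jellyfin's API; verify against your server.
    """
    summaries = []
    for session in sessions:
        item = session.get("NowPlayingItem")
        if not item:
            continue  # a connected client that isn't playing anything
        if item.get("Type") == "Episode" and item.get("SeriesName"):
            title = f'{item["SeriesName"]}: {item.get("Name", "?")}'
        else:
            title = item.get("Name", "?")
        summaries.append(f'{title} on {session.get("DeviceName", "unknown device")}')
    return summaries
```

Callsheet would then take the item's title (or, ideally, an external ID) and look it up; this sketch stops at identifying what is playing and where.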

Interestingly, if you’re a Tailscale person, and you enter your 100.x.y.z or x.ts.net address as your server, the Jellyfin integration is actually the only one of the three that works remotely. Plex and Channels only work if Callsheet is on the same network as the playback client.

I’m pretty proud of both of these features, but if you have issues with either, please feel free to use the in-app feedback feature in Settings to send me thoughts/questions/complaints/etc. Using the in-app feature will automatically include a log file that is often helpful in diagnosing issues.

Last week was spring break, which included a brief trip to show my family my old stomping grounds. As such, I didn’t have a chance to call out this fun appearance I made on The Verge’s and David Pierce’s excellent newsletter, Installer.

My two home screens were featured; as mentioned here a while ago, I have started using only two, and searching for anything else I may need.

I’m really honored to have been asked; I’m also pleased with how it turned out. 😊 If you’re not an Installer subscriber, you should be. David’s got an incredible knack for finding and surfacing cool stuff.

An exceptionally odd thing happened for me this week: my work became the subject of others’ work. My work was the subject of a history lesson.

A while back, Robert McGinley Myers interviewed me as a part of a piece he was producing for his show, Phonograph. I knew he was looking into podcast advertising, and had a keen interest in the toaster reviews, but wasn’t 100% sure where this was all going to end up.

I listened to the episode while we had a bit of downtime during a brief spring break trip earlier this week, and it’s excellent. I wasn’t sure how Robert and his co-host Britta were going to weave a story around… podcast ads (!)… but they did. And it’s a really great listen. I can’t recommend enough that you give it a try.

I am incredibly proud of the work that I do with Marco and John, as well as Myke. I’m impossibly lucky to be able to do this as a vocation. But it’s a whole new feeling to find ATP as the subject of someone else’s history lesson. I’m not entirely sure what to make of it. I’m flattered, of course, even though I mostly play an accessory role in this particular lesson.

But it also puts a mirror in front of me, reflecting how long I’ve been doing this. Listening to John review toasters feels like it was not that long ago, but in actuality, it was over a decade ago. John started the toaster reviews in February of 2015. Declan was still an infant, and Mikaela wouldn’t be born for almost three more years.

As I sit here now, they’re 11 and 8 years old.

I’ve had to reckon with the passing of time quite a lot recently due to some challenges in my personal life[1], and this is another example. It makes me proud that my work is worth examination, but also makes me scared: is this a subtle hint that I’m already past my prime? Is my best work already behind me? Ten plus years behind me?

I sure hope not. And I sure hope I get to keep doing this crazy job with three of my best-est friends for a whole lot longer.

Everything is fine, broadly, and way more than fine with the kids, Erin, and me. 😁 In summary, getting old sucks, but as the old adage says, the alternative remains worse. ↩

I had the absolute pleasure of joining my pals Dan, Mikah, and Bryan on Clockwise.

On this week’s episode, we discussed the likelihood of full self-driving ever really landing — which has already proven fortuitous — as well as software we wish we didn’t have to update, app launchers we use on our Macs, and the [apparently?] surging popularity of ATProtocol.

Nearly two years ago now, our family replaced our beloved 2017 Volvo XC90 with a 2024 Volvo XC90. The original XC90 had a catastrophic mechanical failure, due to reasons beyond its control[1]. This led to our insurance company totaling the car.

When seeking a replacement for Erin, we initially thought we’d get a slightly-newer version of the same car, but quickly discovered two truths of July 2024:

- The used market for Volvo XC90s was not very robust

- Our XC90 was the “beater” trim, but was really well-optioned, which meant finding a like replacement was like finding a unicorn

Before long, with the knowledge that time was not on our side, we realized it’d probably be better just to get a new XC90. Furthermore, at the time, there were really great financial incentives for the plug-in hybrid model, so that’s what we bought: a 2024 Volvo XC90 Recharge.

In the last couple years, we’ve been extremely happy with the car[2], and I thought it may be useful to share why. Doubly so since it appears PHEVs are taking some lumps right now.

To briefly recap, a plug-in hybrid means that Erin’s car has both a traditional internal combustion engine and an electric motor. However, unlike traditional hybrids that have been around for twenty years, hers can be plugged in using the same connector full-electric cars use.

Unlike a full-electric car, her car gets only around 30 miles (50km) of range on a full charge. (And, surprisingly, it still takes around 8 hours to charge from empty.) After that, the ICE has to take over.

We live in the suburbs of Richmond, Virginia; this means that to do anything we need to drive. However, we’re lucky enough to live close to darn near everything, and as such, we rarely need to go more than about 5 miles to get to a store/appointment/etc.

Generally speaking, Erin will run a couple errands every day; it’s quite rare she cannot do this within one charge of the battery. Further, if she has both morning and afternoon errands, she can usually top up the battery enough in the interim to make it all work on just electricity.

When we go to tailgates in the fall, we can usually make it roughly ⅔ of the way there on battery alone. For the remainder, the car automatically and unobtrusively switches to gasoline.

For vacations in which we take the car — usually a beach between 2-5 hours away — we just… drive the car. The battery depletes quickly, but then we just use gasoline. As we’ve been doing since we started driving in the late 1990s.

For us, the car really is no-compromise.

In fact, since we took delivery of the car in July of 2024, we have put roughly 12,000 miles (20k km) on the car, and have put in only 120 gallons of gas, across only 12 fill-ups. While it’s not at all a fair metric, the car’s effective lifetime fuel economy is 90.9 MPG.

The best tank of gas we got was effectively 469.3 MPG. That is not a typo.

Her last car’s lifetime MPG — across 7 years — was 19.1 MPG.
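The “effective” figure is simply miles driven divided by gallons of gas bought; the electricity is ignored entirely, which is exactly why it isn't a fair metric. A quick Python check: the rounded figures above (roughly 12,000 miles on 120 gallons) come out to an even 100 MPG, so the 90.9 MPG figure presumably reflects the exact, unrounded totals, which aren't given here. For scale, covering the same ~12,000 miles at the old car's 19.1 MPG would have taken about 628 gallons.

```python
def effective_mpg(miles: float, gallons: float) -> float:
    """Miles per gallon of gasoline purchased; electricity used is not counted."""
    return miles / gallons


def gallons_needed(miles: float, mpg: float) -> float:
    """Gallons a car with the given fuel economy would burn over `miles`."""
    return miles / mpg


print(effective_mpg(12_000, 120))           # 100.0
print(round(gallons_needed(12_000, 19.1)))  # 628
```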

Our use case is unique, in that Erin has no regular commute, and we live very close to darn near anywhere we need to go frequently. However, for people in a similar situation to ours, I cannot recommend a PHEV enough.

The argument could absolutely be made that, in buying a car that has any internal combustion engine, we’re optimizing for the wrong thing. We could just rent an ICE car for the vacations, and have a BEV for the other 99% of our trips.

On the surface, that’s not wrong, but Erin buying a BEV wouldn’t really improve anything for us. That’s the whole point I’m trying to drive at with this post: her car is effectively a BEV for 95% of our trips with it.

My car, however, absolutely should be a BEV. I just can’t bear to part with it…

When I was in Cupertino for WWDC 2024, I got a phone call as I was in Apple Park waiting for the keynote. Erin knew full well that I was extremely busy, so this call was immediately ominous.

She was calling for our AAA number, so she could get a tow. Her car had died, on the side of the road, on the way back from picking up our daughter from summer camp.

As we later pieced together, a pebble was kicked up into the engine bay, which lodged itself in one of the tensioners for the main serpentine belt. This shredded the belt, which then punched a hole in the timing belt cover. The timing belt then got destroyed, and this led to the engine suddenly becoming an interference engine.

The engine was deemed a total loss, and that led to insurance calling the car a total loss.

We ended up going to the Volvo dealer to clear out our personal effects on our seventeenth wedding anniversary. ↩

While we mostly really like the car, it falls down when it comes to the electronics. AAOS is… okay. Erin is a huge SiriusXM fan, and the app in this Volvo is far worse than the one in her prior car’s Sensus infotainment. Unrelated to AAOS, the proximity key loves to take 5+ tries to actually work — even after a battery replacement. Most alarmingly, the car’s rear collision avoidance absolutely loves to stand on the brakes on Erin’s behalf as she reverses up our driveway. ↩

Last week, I joined Leander Kahney, Lewis Wallace, and D. Griffin Jones on the Cult of Mac Podcast. On the episode, we discussed some news from the week, I did a [mostly successful] walkthrough of Callsheet, and then we played a little prediction game that Griffin put together for this week’s announcements.

In an unusual turn of events for me, we did the whole episode on video, so you can also watch on YouTube.

I had a lot of fun on the show, and even ended up steering us into having an after-show, which is a Cult of Mac Podcast first. (It was 🎵 accidental 🎵.)

Passwords are dumb.

I’m sorry, Ricky, but this isn’t a post about passkeys.

Instead, it’s a post about OpenID Connect, and more specifically, tsidp. But, much like a recipe found online, we’ll get there.

Over the last several years, I’ve become more and more of a “homelabber”. Your definition may vary, but to me, it means that I’m running more and more server or server-like applications and devices in my house.

Over the last couple weeks, I’ve made some significant changes to the hardware in my homelab, which has encouraged me to reevaluate some of the software choices I’ve made as well.

I may talk about this more here, and will certainly talk about it on ATP at some point, but the short-short is that I “repatriated” a NUC that I had stationed at a friend’s in Connecticut so that I could slurp up TV to watch Giants games. Once it was back in the house, I installed Proxmox (new to me!) and… things snowballed fast.

Last night, I was somehow reminded of a video by my friend Alex. In it, Alex explains that, for Tailscale users like myself, you’ve already established who you are, as long as you’re logged into your tailnet. Why not leverage that known identity for authorization?[1]

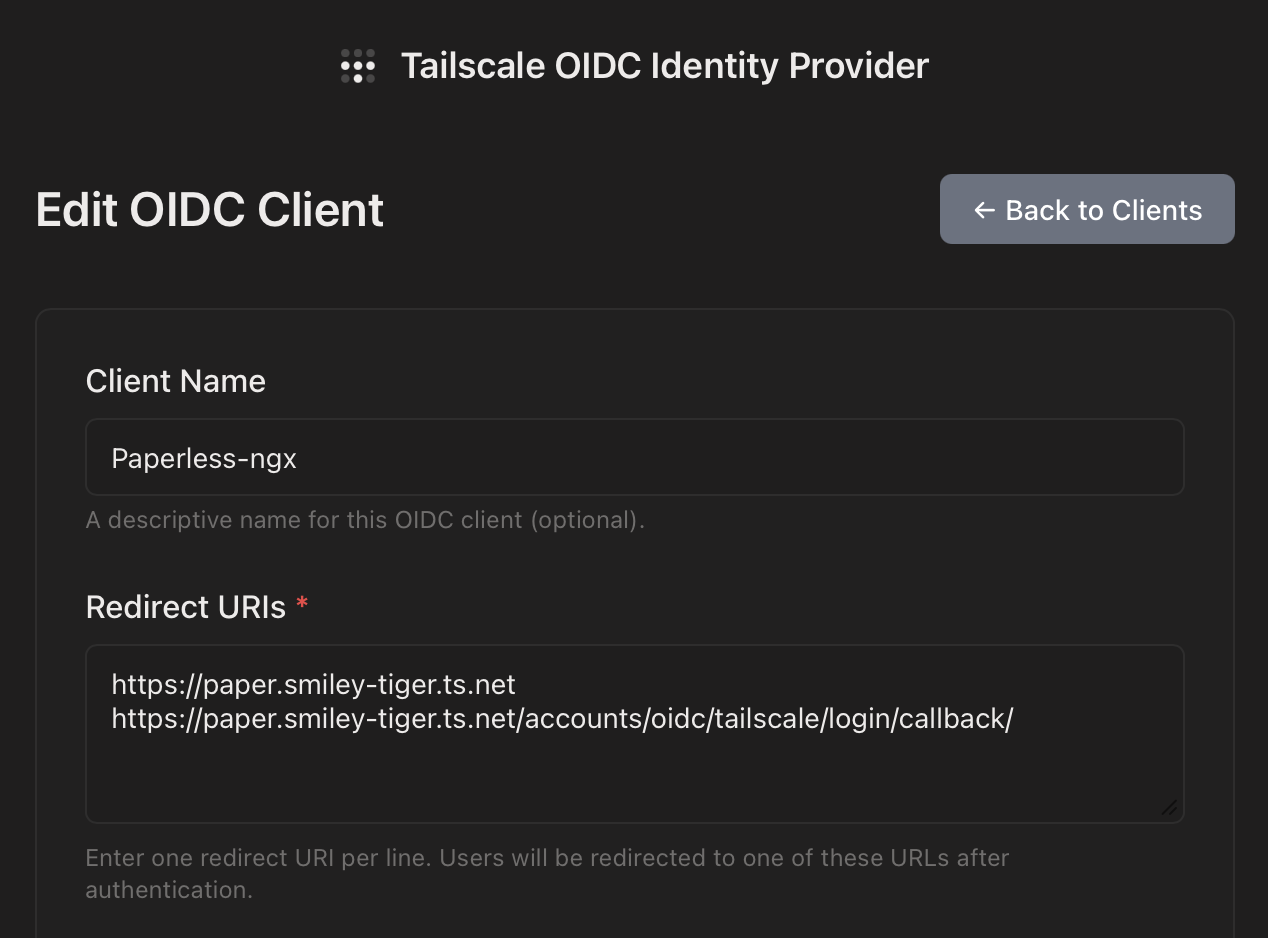

Enter tsidp. This is a very small and straightforward OIDC identity provider. Said differently, your applications in your homelab can ask “who is this person?”, and tsidp can answer. At that point, that account’s permissions are controlled within the app in question.
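That split, where the identity provider answers “who?” and each app answers “what may they do?”, is easy to see in a toy model. This hypothetical Python sketch is not any real app's code: it keys local accounts on the `preferred_username` claim (the identifier used in the Portainer setup below) and creates first-time users with no privileges, which is why a freshly created account has to be blessed by an existing admin.

```python
from dataclasses import dataclass, field


@dataclass
class LocalUser:
    username: str
    is_admin: bool = False  # permissions live in the app, not in the IdP


@dataclass
class HomelabApp:
    """Hypothetical app that trusts an OIDC provider for identity only."""
    users: dict = field(default_factory=dict)

    def login_with_claims(self, claims: dict) -> LocalUser:
        # The IdP has already answered "who is this person?"...
        username = claims["preferred_username"]
        user = self.users.get(username)
        if user is None:
            # ...but a first-time user gets an account with no privileges.
            user = LocalUser(username)
            self.users[username] = user
        return user

    def promote(self, admin: LocalUser, target: LocalUser) -> None:
        """Only an existing admin can grant admin rights."""
        if not admin.is_admin:
            raise PermissionError(f"{admin.username} cannot grant privileges")
        target.is_admin = True
```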

I started down this road with Proxmox, which was very straightforward, since Alex covered specifically that in his video. I followed Proxmox with trying to figure out where else I could leverage OIDC, and found that Portainer supports it as well. As does Paperless-ngx. Both of these were a touch squishy to get configured properly, so I thought I’d share how I got them working here.

I’ll assume you can handle the tsidp side on your own; I’m concentrating on just the app-side configuration for this post.

Portainer

Let’s assume that your tailnet name is smiley-tiger.ts.net, and you’ve set up tsidp at idp.smiley-tiger.ts.net. Naturally, you’ll need to change these for the particulars of your install/tailnet.

In Settings → Authentication:

- Select `OAuth` as your `Authentication method`
- Turn on `Use SSO`. I recommend leaving `Hide internal authentication prompt` off, for safety’s sake
- Leave defaults until you get to the `Provider`, where you’ll select `Custom`.
- For the OAuth Configuration, use the following. For URLs, note that trailing slashes are not used:
    - `Client ID` and `Client secret`: from tsidp
    - `Authorization URL`: `https://idp.smiley-tiger.ts.net/authorize`
    - `Access token URL`: `https://idp.smiley-tiger.ts.net/token`
    - `Resource URL`: `https://idp.smiley-tiger.ts.net/userinfo`
    - `Redirect URL`: your Portainer URL, such as `https://portainer.smiley-tiger.ts.net`
    - `User identifier`: `preferred_username`
    - `Scopes`: `email openid profile`

After you set all those settings, you should be able to connect with tsidp by simply tapping the large Login with OAuth button.

Note that, as usual, you’ll likely need to bless the account you just created with privileges using your previous, internally authorized, account.
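If you'd rather not type those endpoint URLs by hand, OIDC providers conventionally publish them in a discovery document at `/.well-known/openid-configuration` under the issuer URL. I'm assuming tsidp does too, so check that path on your own instance. This Python sketch maps the standard discovery fields (`authorization_endpoint`, `token_endpoint`, `userinfo_endpoint`) onto Portainer's form labels and strips trailing slashes, per the note about URLs above. The helper names are hypothetical, and the `smiley-tiger` hostnames are the same placeholders as before.

```python
import json
from urllib.request import urlopen

DISCOVERY_PATH = "/.well-known/openid-configuration"


def fetch_discovery(issuer: str) -> dict:
    """Download the provider's OIDC discovery document (needs network access)."""
    with urlopen(issuer.rstrip("/") + DISCOVERY_PATH, timeout=10) as resp:
        return json.load(resp)


def portainer_fields(discovery: dict, portainer_url: str) -> dict:
    """Map discovery metadata onto Portainer's custom OAuth provider form."""

    def no_slash(url: str) -> str:
        return url.rstrip("/")  # Portainer's URLs are entered without trailing slashes

    return {
        "Authorization URL": no_slash(discovery["authorization_endpoint"]),
        "Access token URL": no_slash(discovery["token_endpoint"]),
        "Resource URL": no_slash(discovery["userinfo_endpoint"]),
        "Redirect URL": no_slash(portainer_url),
        "User identifier": "preferred_username",
        "Scopes": "email openid profile",
    }
```

On a machine in your tailnet, `portainer_fields(fetch_discovery("https://idp.smiley-tiger.ts.net"), "https://portainer.smiley-tiger.ts.net")` would return the values to paste into the form.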

Paperless-ngx

This one was quite a bit less straightforward. Previously unbeknownst to me, Paperless runs on Django, so the configuration for OIDC is basically pulled from there.

To configure this in Paperless, you need to set some environment variables. I’m using Docker Compose, so in my `environment` clause, I added the following:

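```yaml
# Inside the Paperless-ngx service definition in docker-compose.yml
environment:
  # A folded (>) block scalar, so the JSON below is passed as a single string
  PAPERLESS_SOCIALACCOUNT_PROVIDERS: >
    {
      "openid_connect": {
        "APPS": [
          {
            "provider_id": "tailscale",
            "name": "Tailscale",
            "client_id": "{{YOUR_CLIENT_ID}}",
            "secret": "{{YOUR_SECRET}}",
            "settings": {
              "server_url": "https://idp.smiley-tiger.ts.net"
            }
          }
        ]
      }
    }
```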

After doing so, and setting things up in tsidp as I had before, I was faced with errors on the tsidp side about a mismatched callback URL. After much trial and error, I discovered I needed to add another valid URL to tsidp. This is how I ended up:

The key here was the second URL:

https://paper.smiley-tiger.ts.net/accounts/oidc/tailscale/login/callback/

Note a couple things about this URL:

- The `tailscale` you see there is the `provider_id` you specified in the environment variable above
- You actually do need the trailing slash in this case

Once I had that set, I was able to log in. As with Proxmox and Portainer, I then had to bless that new user with Administrative privileges, and then I was off to the races.
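Since the `provider_id` has to match in two places, and hand-editing JSON inside YAML invites a stray comma, one option is to generate both from a single value. A minimal Python sketch, assuming the same placeholder hostnames; the callback path is the one that worked above, and the helper names are hypothetical.

```python
import json


def paperless_providers_json(provider_id: str, client_id: str, secret: str, issuer: str) -> str:
    """Value for PAPERLESS_SOCIALACCOUNT_PROVIDERS, mirroring the Compose snippet."""
    providers = {
        "openid_connect": {
            "APPS": [
                {
                    "provider_id": provider_id,
                    "name": "Tailscale",
                    "client_id": client_id,
                    "secret": secret,
                    "settings": {"server_url": issuer},
                }
            ]
        }
    }
    return json.dumps(providers, indent=2)


def paperless_callback_url(paperless_url: str, provider_id: str) -> str:
    """Redirect URL to register in tsidp; the trailing slash is required."""
    return f"{paperless_url.rstrip('/')}/accounts/oidc/{provider_id}/login/callback/"
```

Changing `provider_id` in one place then keeps the environment variable and the tsidp redirect URL in sync.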

The Future

At this point, I think I’ve enabled OIDC for everything I can. There’s a suite of other apps I’d love to use OIDC for, but apparently it’s not supported yet. (IYKYK)

That said, I can now log into Proxmox, Portainer, and Paperless-ngx — all the P’s! — without having to enter a password. And that’s pretty rad.

I’m no security professional, and it wouldn’t surprise me if I’m getting these terms slightly wrong. Just roll with me here. ↩

Since becoming afflicted — becoming a Home Assistant user — I’ve shown the same symptoms as this illness always does: I want to automate everything.

My home automation journey started during the pandemic, with me wanting to have an LED light that illuminated in our bedroom when the garage door was open. This involved a Rube Goldberg contraption made up of two Raspberry Pis, a contact sensor, and some custom Python. After the project was over, I had an LED light attached to our headboard that would only illuminate when the garage door was open. It’s saved us from leaving the door open overnight a handful of times.[1]

In the ensuing years, I have wanted to find a similar, but slightly more general-purpose solution. There are some other state-of-the-house things I’d like to monitor, and I’d like to do so in a more central place in the house. Given our house was built in the late 90s, we have RJ11 coming out of one of the walls in the kitchen at nearly eye level. I had tried to convince Erin to let me repurpose that — my vision was to drill a trio of LEDs into a blank faceplate, stick something behind it, and call it a day.

Unfortunately, Erin has taste, and gently led me to the conclusion this would be… a bit of an eyesore. So I had to re-evaluate.

Around this time, I was lamenting this problem on ATP, and a listener pointed me to these now-discontinued HomeSeer switches. At a glance, this is a standard Decora-style dimmer that can be controlled via ZWave. However, upon closer inspection, there are seven LEDs on the left-hand side. Even more interestingly, you can put the switch in “status mode”, which means each of the LEDs can be individually controlled.

And just to put icing on the cake, the listener had an extra that they were willing to send me. Thank you, again, Chris R. 🥰

Once it arrived, I had to teach myself ZWave, and figure out how to get the HomeSeer into “status mode”. However, once I did, it was pretty quick and astonishingly easy to get Home Assistant talking to not only the dimmer, but the LEDs as well.

So, here’s where I ended up:

The image is under-exposed on purpose, and the colors are a touch off in this photo, but I assure you they’re vivid but not attention-grabbing when seen in person.

The center switch actually controls the lights above our kitchen table. But for me, it’s the home status board. Here’s what each of the lights indicates, from top to bottom:

| LED Color | Purpose |
|---|---|
| None | Not currently used |
| Green | The laundry needs attention |
| Blue | The mail is waiting |
| None | Not currently used |
| White | The Volvo is charging |
| Magenta | The shed door is open |
| Red | The garage door is open |

The steady/all-good state of the status board is for all of the LEDs to be extinguished. If any of them is on, it doesn’t necessarily mean that something needs attention, but it may.

The green LED will flash while the washer or dryer is running, and remain lit when clothes need to be moved.[2]

The blue LED will illuminate if the mailbox has been opened for the first time in the day, and if that opening happened after 10 AM. We almost always place outgoing mail in the mailbox first-thing in the morning, and the mail almost never comes before dinnertime. A LoRa contact sensor is what’s feeding this.
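One way to read that rule as code: ignore morning openings (outgoing mail), and light the LED at the first opening after 10 AM. The post doesn't say what turns it off again; this sketch assumes the next opening, when someone fetches the mail, does. The function name and the list-of-timestamps input are hypothetical; in Home Assistant itself this would more likely live in an automation or template than in standalone Python.

```python
from datetime import datetime, time
from typing import List

CUTOFF = time(10, 0)  # 10 AM, per the rule above


def mail_waiting(opens_today: List[datetime]) -> bool:
    """Should the blue LED be lit, given today's mailbox-open events?

    Morning opens (outgoing mail) are ignored, and the first open after
    10 AM is taken to be the carrier. Assumption, not from the post: the
    next open after that is someone fetching the mail, which clears the LED.
    """
    after_cutoff = [t for t in opens_today if t.time() > CUTOFF]
    return len(after_cutoff) == 1
```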

The white LED will illuminate as long as Erin’s plug-in hybrid is actively charging. Home Assistant has an integration for Volvo.

The magenta LED will illuminate if the shed door is open. Here again, another LoRa contact sensor.

The red LED will illuminate when the garage door is open, and it will flash while it is raising or lowering. Home Assistant has an integration for our weird garage door opener.
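Taken together, the board is a small pure function from the state of the house to seven (color, mode) pairs. Here's a hypothetical Python sketch; the state names are mine rather than Home Assistant entity IDs, and pushing the results to the switch's LEDs is left out.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class HouseState:
    """Hypothetical snapshot of the things the board reports on."""
    laundry: str = "idle"    # "idle", "running", or "needs_moving"
    mail_waiting: bool = False
    volvo_charging: bool = False
    shed_open: bool = False
    garage: str = "closed"   # "closed", "open", "opening", or "closing"


def led(color: str, lit: bool, flashing: bool = False) -> Tuple[str, str]:
    """Return (color, mode) where mode is "off", "on", or "flash"."""
    if flashing:
        return (color, "flash")
    return (color, "on" if lit else "off")


def board(h: HouseState) -> List[Tuple[str, str]]:
    """The seven LEDs, top to bottom, in the order of the table above."""
    return [
        led("none", False),  # unused
        led("green", h.laundry != "idle", h.laundry == "running"),
        led("blue", h.mail_waiting),
        led("none", False),  # unused
        led("white", h.volvo_charging),
        led("magenta", h.shed_open),
        led("red", h.garage != "closed", h.garage in ("opening", "closing")),
    ]
```

The all-good state falls out naturally: a default `HouseState()` yields seven LEDs that are all off.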

This status board is, for any reasonable human, lunacy. None of this is particularly necessary, and I could make a strong argument that most of it isn’t particularly helpful. For example, the distance between the red “the garage is open” LED and the doorway to the garage is approximately three paces.

That said, I have come to quite like having it in the kitchen, where I naturally pass by it many times daily, to be able to see the state of some critical systems in the house. The kids have, slowly, started to learn what LED means what.

Erin could not possibly care less. She’s just happy not to have the eyesore I originally proposed.

Over the years, the way the LED works has changed. It’s still powered by a Raspberry Pi, but instead of some bespoke communication over UDP, it listens for a topic on my local MQTT server, and will illuminate based on that. Currently, Home Assistant will tell it to illuminate if the shed or the garage are open. ↩
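The bedroom LED's logic is now tiny: Home Assistant publishes on/off, and the Pi just follows along. Here's a sketch of both halves, with a made-up topic name and `ON`/`OFF` payloads, since the post doesn't specify either. The Pi-side function takes a topic string and raw payload bytes, which is what an MQTT client library such as paho-mqtt hands to its message callback; the client wiring itself is omitted to keep this self-contained.

```python
# Hypothetical topic; the post doesn't say which topic the Pi subscribes to.
LED_TOPIC = "home/bedroom/status_led"


def desired_payload(shed_open: bool, garage_open: bool) -> str:
    """Home Assistant side: light the LED if either door is open."""
    return "ON" if (shed_open or garage_open) else "OFF"


def next_led_state(topic: str, payload: bytes, current: bool) -> bool:
    """Pi side: new LED state for an incoming message.

    Other topics and unrecognized payloads leave the LED as it was.
    """
    if topic != LED_TOPIC:
        return current
    command = payload.decode("utf-8", errors="replace").strip().upper()
    if command == "ON":
        return True
    if command == "OFF":
        return False
    return current
```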

We have a natural gas dryer, and thus it uses a standard 110V plug. I put the washer and dryer on their own Shelly plugs, and Home Assistant monitors their power usage. When the washer’s power draw falls below a few hundred watts for a full minute, it is assumed the clothes are waiting to be moved to the dryer.

When the dryer starts drawing a few hundred watts, it is assumed the clothes are drying.

When the dryer is drawing a low wattage, it’s assumed the clothes have been removed. The low wattage is because it’s powering its internal light.

This system isn’t 100% perfect, but it’s been surprisingly accurate. ↩
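Those rules amount to a small state machine over the two plugs' power readings. Here's a Python sketch of one way to express it. The thresholds are assumptions: 200 W stands in for “a few hundred watts”, 5 W for the dryer's lamp, and I've reused the one-minute settle time for the dryer, which the post only specifies for the washer. The green LED flashes while a machine runs and stays lit when clothes need moving, matching the behavior described earlier.

```python
from dataclasses import dataclass
from typing import Optional

RUNNING_W = 200.0   # assumption: "a few hundred watts" means running
SETTLE_S = 60.0     # "a full minute" below the threshold means done
LIGHT_MIN_W = 5.0   # assumption: the dryer's internal lamp draws a few watts

# Green LED behavior for each state.
LED_FOR_STATE = {
    "idle": "off",
    "washing": "flash",
    "wash_done": "on",   # clothes waiting to go into the dryer
    "drying": "flash",
    "dry_done": "on",    # clothes waiting to come out of the dryer
}


@dataclass
class LaundryMonitor:
    state: str = "idle"
    below_since: Optional[float] = None

    def _settled_below(self, now: float, watts: float) -> bool:
        """True once `watts` has stayed under RUNNING_W for SETTLE_S seconds."""
        if watts >= RUNNING_W:
            self.below_since = None  # still running; restart the clock
            return False
        if self.below_since is None:
            self.below_since = now
        return now - self.below_since >= SETTLE_S

    def update(self, now: float, washer_w: float, dryer_w: float) -> str:
        """Feed one pair of plug readings (timestamp in seconds); return the new state."""
        if self.state == "idle":
            if washer_w >= RUNNING_W:
                self.state = "washing"
        elif self.state == "washing":
            if self._settled_below(now, washer_w):
                self.state, self.below_since = "wash_done", None
        elif self.state == "wash_done":
            if dryer_w >= RUNNING_W:
                self.state = "drying"
        elif self.state == "drying":
            if self._settled_below(now, dryer_w):
                self.state, self.below_since = "dry_done", None
        elif self.state == "dry_done":
            # Door opened: the lamp draws a little power, so assume clothes removed.
            if LIGHT_MIN_W <= dryer_w < RUNNING_W:
                self.state = "idle"
        return self.state
```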

A few years ago, AT&T sunset our then-current plan, and aggressively forced us to switch to a different, more modern plan. Naturally, that more modern plan just happened to be quite a lot more money.


🙄

We figured we could save money by pairing our Internet & TV service with our mobile service. We switched from AT&T → Verizon. At the time, we were saving a ton of money. In fact, since we didn’t get new phones as part of the deal, we got something like $1000 in credits that we used to pay for service for the better part of a year.

Over the years, the cost of both our home and mobile plans has ratcheted up, to the point that we’re now paying ~$135/mo (❗️) for Internet and TV; we’re paying roughly $185/mo (‼️) for two phones and an iPad. The bills are too damn high.

A few listeners — apropos of me seeking a pay-as-you-go wireless hotspot[1] — pointed me to US Mobile. They are an MVNO that actually works with all three major networks[2]. Allegedly they’re easy to work with, which is to say they have a modern and easily navigable website.

More importantly, however, they offer darn near all the same stuff as the big three do, but for way less money. Their most expensive plan, before add-ons, is $32.50 per month.

I decided I was going to give them a shot, because I should be able to save at least $30/month by switching.[3] So, I went to Verizon’s website, and tried to find the place where I could start the number porting process. I couldn’t find it quickly, so I searched for “port number”.

And I’ll be damned, but once I saw the results of my search, right there at the top, just below the search box, was an offer to save $20 per month per line. Just because.

So I clicked it. It didn’t work, because Verizon’s website is trash, but it did offer a link to a place I hadn’t previously found, where I had several offers. All of them had catches of some sort — get a new phone for free and commit to a new two-year agreement and so on.

But sure enough, there was a $20/mo/line discount offered. I clicked on it, verified I wanted it on both Erin’s and my lines, and that was that. In theory, our bill next month will be $145, rather than $185.

So, if you’re out of a long-term contract with your carrier, and are just riding month-to-month, it may be worth trying the same thing.

I get that it’s not exactly in Verizon’s best interests to give me the best deal they possibly can. I get that they’re a business designed to extract money from my wallet, so they can place it in theirs. But still, this felt gross. It was only when they [rightly!] detected they were in the midst of a potential case of churn that they suddenly found a way to lower my bill.

I hate everything, at the moment. But right now I really hate capitalism.

Well, and ICE. Screw those lawless chodes.

US Mobile doesn’t really fit the bill here, but if you have any tips, I’m all ears. Simo seems to be exactly what I’m seeking, but I’ve heard both good and bad. ↩

Interestingly, with some restrictions/cost depending on your particular plan, you can swap between networks. Suppose you’re on Verizon when you sign up, but you later move somewhere AT&T has better coverage; you can switch, all while staying a US Mobile customer. Or, for a monthly fee, you can have your phone ride on two networks, for better coverage. ↩

Our Verizon situation comes with discounts for having all three services with them. We also currently get “free” Disney+ and ESPN+, which would need to be paid for directly in the future. Additionally, we pay $10/mo for the cellular iPad, and that would need to be accounted for as well. ↩

The last couple weeks have been really busy, so I didn’t get a chance to post about my appearance on Downstream. As discussed on ATP, it sounds like my time with a CableCARD-powered HDHomeRun is likely coming to an end.

On this episode of Downstream, Jason and I talked a bit about Callsheet, and then he helped guide me through the process of figuring out what comes after Verizon shifts to IPTV and retires their CableCARDs. It was, selfishly, extremely useful; however, I think it also serves as a good model for figuring out which of the myriad of TV streaming services is the best fit for you.